Adaptive Feature Fusion-Enhanced Vision Transformer Autoencoder for Realistic Image Inpainting

Vol 7, Issue 1, 2026

Abstract

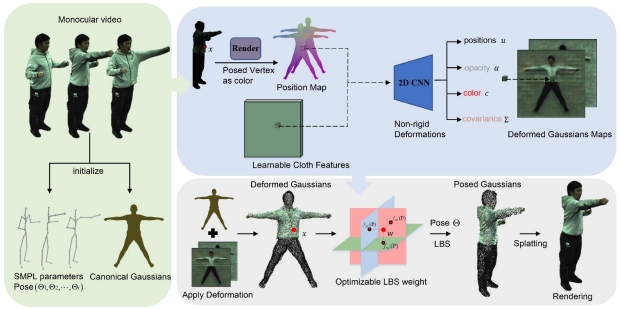

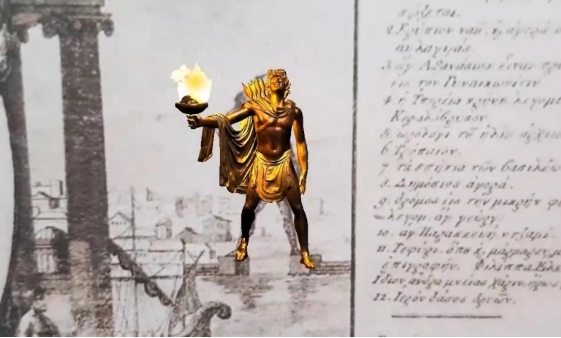

Image inpainting restores missing or corrupted image regions using contextual information from the surrounding pixels. In the Metaverse, which integrates Virtual Reality (VR), Augmented Reality (AR), and Artificial Intelligence (AI), realism and visual continuity are vital for immersive user experiences. This paper presents Vi-Trans, a Vision Transformer-based autoencoder for high-quality image inpainting. The model divides each image into non-overlapping patches and reconstructs the masked regions by learning global context through multi-head self-attention and feed-forward layers. To improve structural integrity and edge preservation, a novel Adaptive Feature Fusion (AFF) module fuses the global transformer representations with local encoder features through an attention-weighted mechanism. This dynamic fusion balances semantic understanding against fine-grained spatial detail and improves visual consistency. Experimental evaluations on the CelebA dataset show that Vi-Trans with AFF outperforms existing transformer-based inpainting methods on the PSNR, SSIM, FID, and LPIPS metrics.
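The two mechanisms named in the abstract, non-overlapping patch division and attention-weighted fusion of global and local features, can be illustrated with a minimal sketch. This is not the authors' implementation: the function names `split_into_patches` and `adaptive_fuse`, the scalar sigmoid gate, and the weight vectors `w_global` and `w_local` are assumptions made here for illustration, since the abstract does not specify how the AFF weights are computed.

```python
import math


def split_into_patches(image, patch):
    """Split a 2-D list (H x W) into non-overlapping patch x patch tiles.

    Tiles are returned in row-major order. The image size is assumed to be
    divisible by the patch size (an assumption of this sketch).
    """
    h, w = len(image), len(image[0])
    if h % patch or w % patch:
        raise ValueError("image size must be divisible by the patch size")
    return [
        [row[c:c + patch] for row in image[r:r + patch]]
        for r in range(0, h, patch)
        for c in range(0, w, patch)
    ]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def adaptive_fuse(global_feat, local_feat, w_global, w_local, bias=0.0):
    """Blend two equal-length feature vectors with a learned-style gate.

    Hypothetical gating scheme: a scalar score is computed from both inputs,
    squashed to (0, 1) by a sigmoid, and used as a convex mixing weight.
    Returns the fused vector and the gate value alpha.
    """
    n = len(global_feat)
    if not (len(local_feat) == len(w_global) == len(w_local) == n):
        raise ValueError("all vectors must have the same length")
    score = bias
    score += sum(g * wg for g, wg in zip(global_feat, w_global))
    score += sum(l * wl for l, wl in zip(local_feat, w_local))
    alpha = sigmoid(score)  # weight given to the global (transformer) features
    fused = [alpha * g + (1.0 - alpha) * l
             for g, l in zip(global_feat, local_feat)]
    return fused, alpha


if __name__ == "__main__":
    # A 4 x 4 toy "image" with pixel values 0..15, split into 2 x 2 patches.
    image = [[r * 4 + c for c in range(4)] for r in range(4)]
    patches = split_into_patches(image, 2)
    print(len(patches), patches[0])

    # With zero gate weights the gate is 0.5, so the result is a plain average.
    fused, alpha = adaptive_fuse([1.0, 3.0], [3.0, 1.0], [0.0, 0.0], [0.0, 0.0])
    print(alpha, fused)
```

In this sketch a gate value near 1 favours the global transformer features and a value near 0 favours the local encoder features; a trained model would learn the gate parameters, and could well use a per-channel or per-position gate rather than the single scalar assumed here.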

Copyright (c) 2026 Authors

This work is licensed under a Creative Commons Attribution 4.0 International License.
